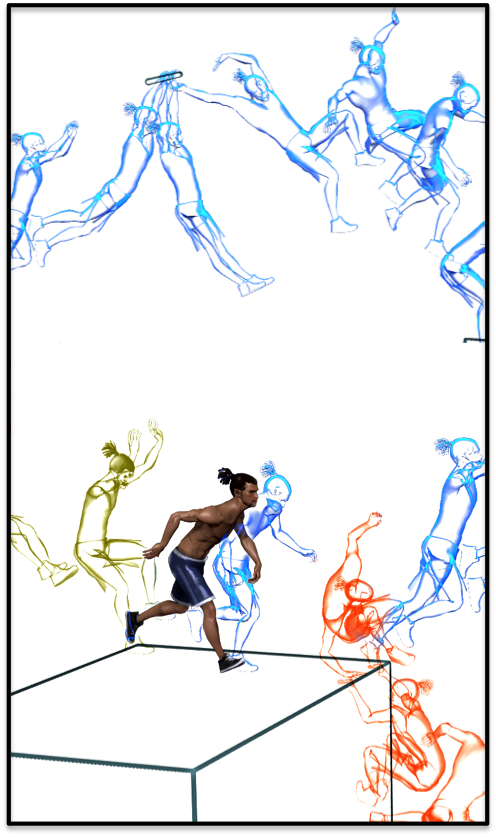

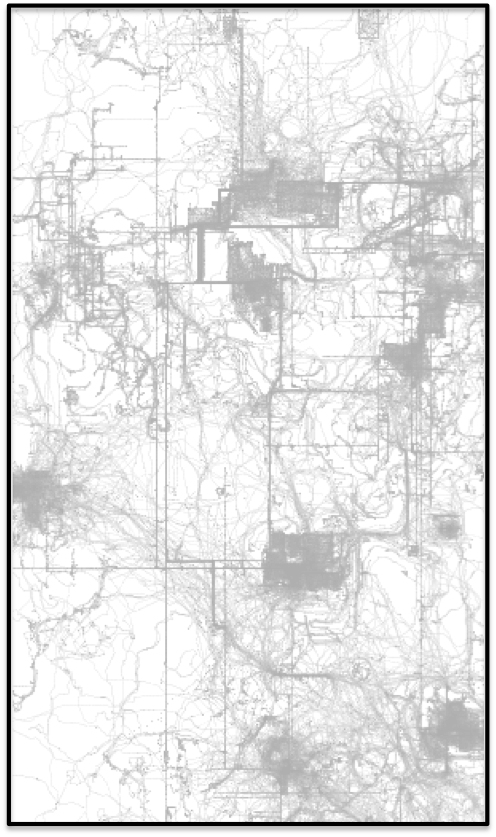

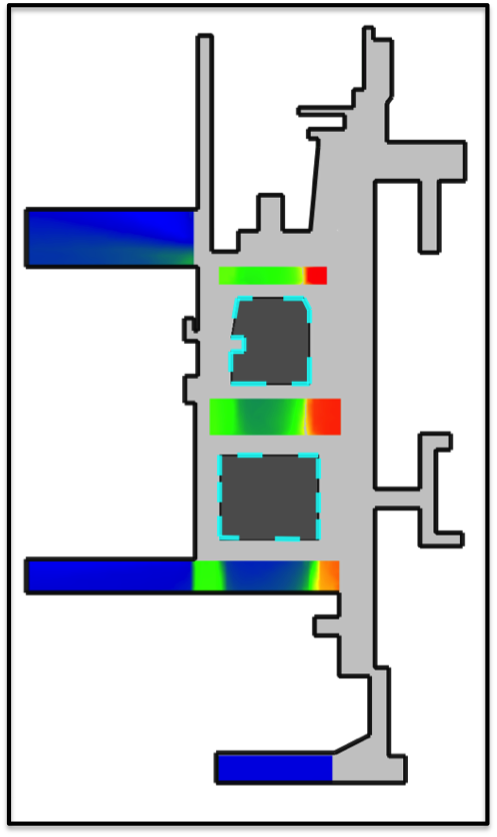

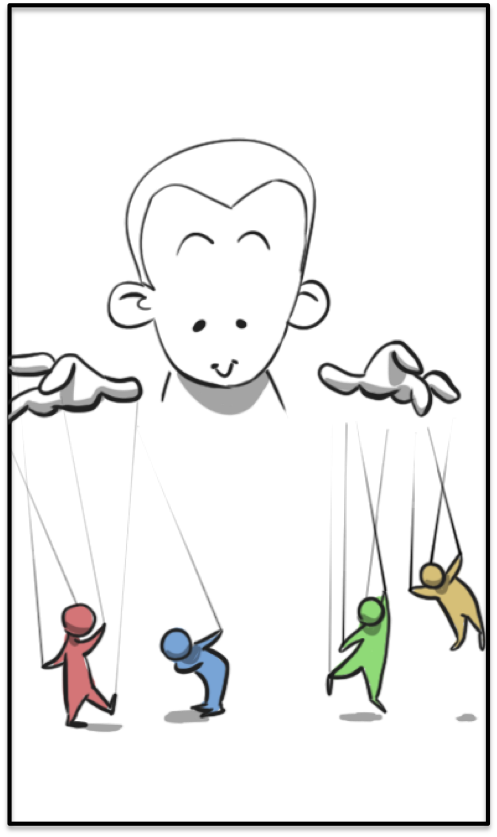

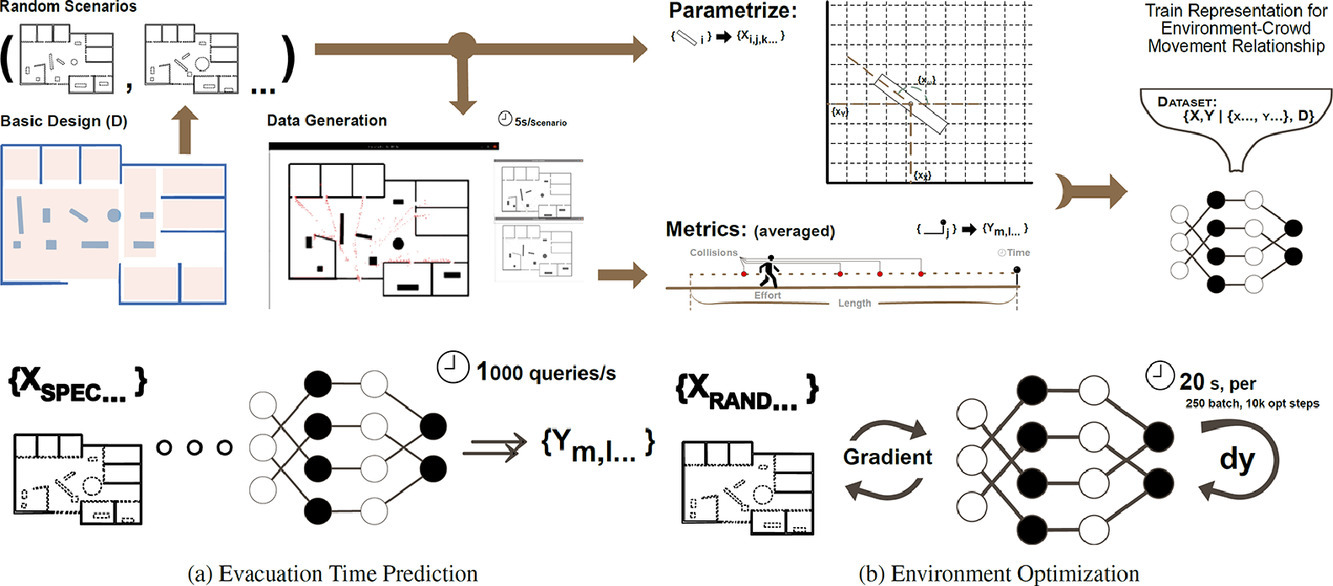

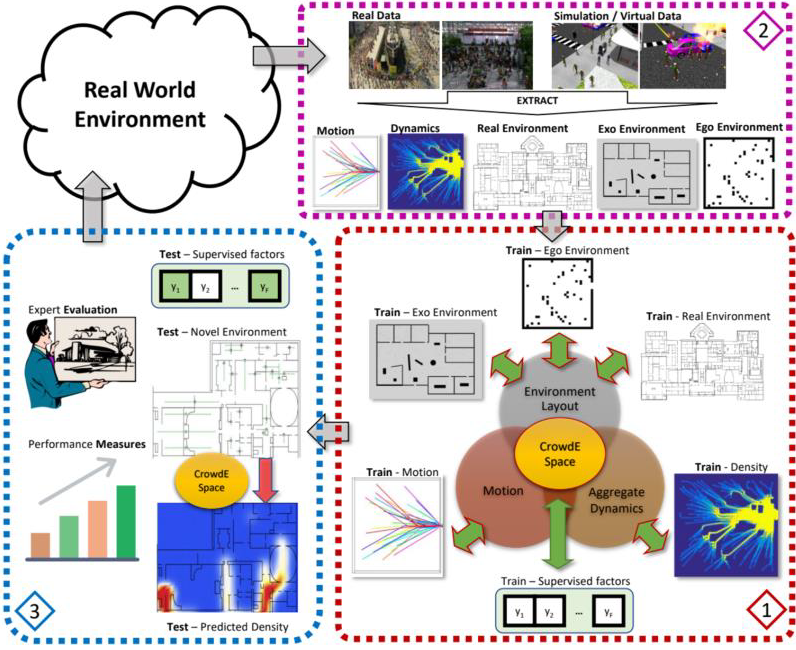

NSF funded project on Learning Joint Crowd-Space Embeddings for Cross-Modal Crowd Behavior Prediction

We have received funding from NSF for our project, Learning Joint Crowd-Space Embeddings for Cross-Modal Crowd Behavior Prediction. Team: Vladimir Pavlovic (PI), Mubbasir Kapadia (Co-PI), Sejong